Anyone with a computer and $25 can play with SDR. You can listen to and watch radio signals: AM, FM, shortwave, public safety, airplanes, satellites, even phones. An example of cheap SDR is the USB dongle described in a recent article.

What is SDR radio? SDR stands for software-defined radio: a radio whose functions and capabilities are defined by software rather than hardware. Here's how that came about.

There were two key developments that led to SDR radio. The first is digital signal processing, or DSP. The second is SpeakEasy. More on that later.

For more than a century, engineers have used mathematics to analyze and improve the performance of audio, radio and other electronic circuits. They still do, although today the mathematics might take the form of a computer simulation rather than just a formula. The performance of every electronic device, passive or active, can be characterized by mathematical equations. Many of these equations arose from theory, many from experimentation. In effect, engineers came up with recipes for manipulating signals with physical devices like amplifiers. These devices in turn were built from components such as tubes, transistors, diodes, capacitors, coils and resistors.

When computers came along, someone got a bright idea. Since we understand the mathematics of signals and circuits, why not forget about the hardware and just use the computer to process signals? Why not, indeed. Computers are digital, however, so they need data rather than signals. By sampling an analog audio or radio signal very quickly, we can convert it into digital data. Once the signal is converted, the computer can process the data to amplify the signal or reduce noise, then convert the data back into an analog signal and play it through a speaker. This sample-and-convert approach is how your CD, DVD or iPod stores and plays back music and video. The process of sampling signals and converting them into data is called analog-to-digital conversion, or ADC. The process of turning data back into an analog signal is called digital-to-analog conversion, or DAC.
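To make the ADC, DSP and DAC chain concrete, here is a minimal Python sketch. It assumes an idealized 8-bit converter and a sample rate of 8,000 samples per second, both chosen purely for illustration, and it models the "analog" signal as a Python function of time. Real converters are hardware; the names `adc`, `amplify` and `dac` are hypothetical.

```python
import math

SAMPLE_RATE = 8000                 # samples per second (illustrative value)
BITS = 8                           # ADC resolution (illustrative value)
FULL_SCALE = 2 ** (BITS - 1) - 1   # largest positive code: 127 for 8 bits

def adc(signal, n_samples, sample_rate=SAMPLE_RATE):
    """Sample an analog signal (a function of time, range -1..1) and quantize it to integers."""
    codes = []
    for n in range(n_samples):
        t = n / sample_rate
        v = max(-1.0, min(1.0, signal(t)))   # clip to the converter's input range
        codes.append(round(v * FULL_SCALE))
    return codes

def amplify(codes, gain):
    """A trivial DSP step: multiply every sample by a gain, clipping at full scale."""
    return [max(-FULL_SCALE, min(FULL_SCALE, round(c * gain))) for c in codes]

def dac(codes):
    """Convert integer codes back into analog-style voltages in -1..1."""
    return [c / FULL_SCALE for c in codes]

quiet_tone = lambda t: 0.25 * math.sin(2 * math.pi * 440 * t)   # a quiet 440 Hz tone
codes = adc(quiet_tone, 16)
louder = dac(amplify(codes, 2.0))
print(codes[:4])
print([round(v, 3) for v in louder[:4]])
```

The "amplifier" here is nothing more than multiplication, which is the whole point: once the signal is data, circuit behavior becomes arithmetic.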

OK. So at this point in the SDR story, we have something that might be called "software radio", where we convert radio signals into data and use software to do DSP on them. But SDR also includes the idea that software defines the radio. What does that mean? That's where the SpeakEasy project comes in.

SpeakEasy was an American military (DARPA, USAF) project during the 1990s that revolutionized radio architecture. The Air Force wanted a single radio that could be reconfigured by software to act as 10 different types of radio. DARPA wanted a standard, open architecture to which many different contractors could contribute. Costs could be reduced and flexibility increased, as more and more of the complexity of radio shifted into software. Long story short, SDR has become the new normal.

How does SDR work?

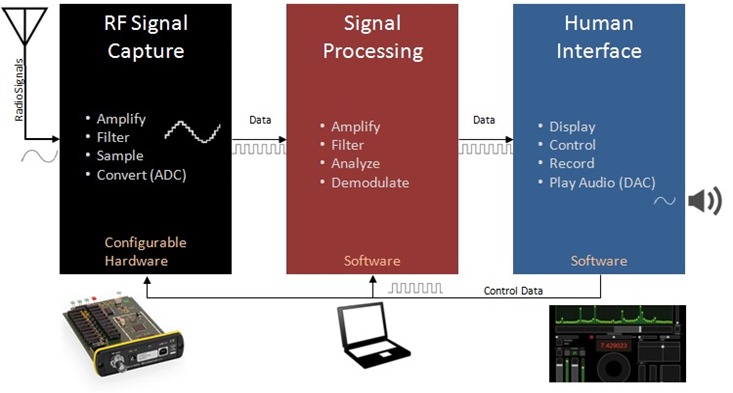

There are basically three parts to SDR, shown in the graphic above. These are signal capture, signal processing and human interface. They are all separate and configurable, and linked by data.
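The idea that the three parts are separate and linked only by data can be sketched in a few lines of Python. Everything here is hypothetical: a synthetic tone stands in for the capture hardware, the processing stage just measures signal level, and the "interface" is a crude text meter. The point is the shape of the design, not a working radio.

```python
import cmath
import math

def synthetic_capture(n_blocks, block_size=256, rate=48_000):
    """Stand-in for signal-capture hardware: yields blocks of complex I/Q samples
    of a 1 kHz tone. A real system would read these from a device or a file."""
    k = 0
    for _ in range(n_blocks):
        yield [0.5 * cmath.exp(2j * math.pi * 1_000 * (k + i) / rate)
               for i in range(block_size)]
        k += block_size

def signal_level(block):
    """Signal-processing stage: average magnitude of the samples in one block."""
    return sum(abs(s) for s in block) / len(block)

def text_meter(level):
    """Human-interface stage: a crude text signal-strength meter."""
    return "#" * round(level * 20)

def run(capture, process, display):
    """The stages only exchange data, so any one can be swapped without touching the others."""
    return [display(process(block)) for block in capture]

for line in run(synthetic_capture(3), signal_level, text_meter):
    print(line)
```

Swapping `synthetic_capture` for a function that reads samples from a dongle or a recording would leave the other two stages untouched, which is the kind of flexibility the SDR architecture is after.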

Signal Capture. The SDR process starts with a piece of hardware that captures, amplifies and filters signals, then converts them into bytes of data. The $25 RTL-SDR radio dongle is just such a device. So are more capable devices like the $1,000 Microtelecom Perseus, shown above. Most of these signal capture devices can be configured through firmware or software to sample in different ways, at different speeds or bandwidths. They can cover a very broad range of spectrum; the original SpeakEasy specification called for coverage from 2 MHz to 2 GHz.

There are two factors to consider in signal capture. The first is filtering: narrowing the range of spectrum that gets amplified and converted, in order to prevent overloading and unwanted mixing of signals. For example, the Perseus covers 40 MHz of radio spectrum with ten band-pass filters and switchable attenuators. By contrast, the much cheaper RTL-SDR dongles cover 1,000 or 2,000 MHz of spectrum with almost no filtering at all.
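Why does overloading matter? A minimal Python sketch, assuming an idealized 8-bit ADC where 1.0 volt equals full scale and made-up signal levels, shows a strong signal driving the converter past its limits. Clipped samples distort the waveform, and that distortion is what creates spurious mixing products. Switching in 10 dB of attenuation brings the peaks back inside the converter's range.

```python
import math

FULL_SCALE = 127   # largest positive code of an 8-bit signed ADC

def adc_8bit(volts):
    """Quantize a voltage (1.0 == full scale) to 8 bits and report whether it clipped."""
    raw = round(volts * FULL_SCALE)
    code = max(-128, min(FULL_SCALE, raw))
    return code, code != raw

def clipped_samples(attenuation_db, n=1000):
    """Feed a strong and a weak tone (made-up levels) through an attenuator and the ADC,
    and count how many samples overloaded the converter."""
    scale = 10 ** (-attenuation_db / 20)                  # dB -> voltage ratio
    count = 0
    for i in range(n):
        strong = 3.0 * math.sin(2 * math.pi * 0.01 * i)   # peaks at 3x full scale
        weak = 0.01 * math.sin(2 * math.pi * 0.137 * i)   # the signal we want to hear
        _, overloaded = adc_8bit(scale * (strong + weak))
        count += overloaded
    return count

print(clipped_samples(0), clipped_samples(10))
```

Note that the attenuator also shrinks the weak signal. When the strong signal sits outside the band you want, a band-pass filter is the better tool, because it removes the strong signal without weakening the one you are listening to.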

The second factor to consider in signal capture is the analog-to-digital conversion (ADC) itself. This is a combination of sampling rate and data size. The sampling rate determines how much of the spectrum can be sampled and converted to data at one time. The data size, meaning the number of bits per sample, determines dynamic range: how wide a range between the strongest and weakest signals being heard the data can handle. For example, the RTL-SDR typically samples at 2,500,000 samples per second and generates 7-8 bit data from its ADC. The Perseus, on the other hand, samples at 80,000,000 samples per second and generates 14-bit data. This gives the Perseus a more than sufficient dynamic range of around 100 dB, while the RTL-SDR's is about half of that. Fascinating, when you realize that the RTL-SDR ADC must convert signals into numbers that can only range from about –128 to +127! And yet, it flies.
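The link between bits, sampling rate and what you can hear can be checked with two textbook rules: the ideal N-bit converter's signal-to-quantization-noise ratio, SQNR ≈ 6.02·N + 1.76 dB, and the Nyquist limit on bandwidth. The sketch assumes the RTL-SDR delivers complex I/Q samples and the Perseus samples the antenna signal directly as real samples. The ideal-quantizer figure is only a ballpark: published dynamic-range specs also depend on noise, linearity and how narrow a bandwidth you listen in.

```python
def ideal_sqnr_db(bits):
    """Signal-to-quantization-noise ratio (dB) of an ideal N-bit ADC, full-scale sine input."""
    return 6.02 * bits + 1.76

def nyquist_bandwidth_hz(sample_rate, complex_iq):
    """Widest slice of spectrum a sample rate can represent without aliasing:
    sample_rate / 2 for real samples, sample_rate for complex I/Q samples."""
    return sample_rate if complex_iq else sample_rate / 2

# Figures from the text; the real-vs-I/Q choice for each device is an assumption.
for name, bits, rate, iq in [("RTL-SDR", 8, 2_500_000, True),
                             ("Perseus", 14, 80_000_000, False)]:
    print(f"{name}: about {ideal_sqnr_db(bits):.0f} dB, "
          f"{nyquist_bandwidth_hz(rate, iq) / 1e6:g} MHz of spectrum")
```

Each extra bit buys about 6 dB, which is why 8-bit data lands near 50 dB. Real sampling at 80,000,000 samples per second spans 40 MHz, in line with the Perseus coverage described earlier.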

Signal Processing. You basically use your PC as your radio. Its performance is limited only by the nature of the data and the power of your PC. SDR is about moving millions of data samples through your PC every second and doing math on them. Signal processing is done either on time-domain data (the form in which the data enters your PC) or on frequency-domain data, which requires a transformation called the FFT (fast Fourier transform). Early amateur SDRs actually converted radio signals down to baseband and used the PC sound card to sample the data in narrow bandwidths. Today, there are many free and open-source programs you can use to process the data collected by your signal capture unit. These include SDR#, HDSDR and my favorite, SDR Console V.2 by Simon Brown. All three of these programs support the RTL-SDR, while the last two will work with many signal capture boxes, including the Perseus.
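To illustrate the jump from time-domain to frequency-domain data, the sketch below finds the strongest frequency in a block of I/Q samples. For clarity it uses a naive discrete Fourier transform written in plain Python; an FFT, as used by real SDR programs, produces the same result much faster. The sample rate, block length and tone are made-up values.

```python
import cmath
import math

def dft(samples):
    """Naive discrete Fourier transform, O(N^2). An FFT computes the same result in O(N log N)."""
    n = len(samples)
    return [sum(samples[k] * cmath.exp(-2j * math.pi * b * k / n) for k in range(n))
            for b in range(n)]

def strongest_frequency(samples, sample_rate):
    """Return the offset (Hz) from the tuned frequency of the strongest spectral bin."""
    spectrum = dft(samples)
    n = len(samples)
    peak_bin = max(range(n), key=lambda b: abs(spectrum[b]))
    # For complex (I/Q) input, bins above n/2 represent negative frequencies.
    if peak_bin > n // 2:
        peak_bin -= n
    return peak_bin * sample_rate / n

# Hypothetical capture: 64 I/Q samples at 64 kHz with a tone 5 kHz above the tuned frequency.
rate = 64_000
tone = [cmath.exp(2j * math.pi * 5_000 * k / rate) for k in range(64)]
print(strongest_frequency(tone, rate))
```

With 64 samples at 64 kHz, each bin is 1 kHz wide; a longer block gives finer frequency resolution, which is one reason spectrum displays let you choose the FFT size.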

Human Interface. While digital signal processing is the SDR engine under the hood, most software combines signal processing with a human interface, or GUI. The human interface innovation in SDR software is the ability to display a frequency spectrum in addition to providing virtual controls for the radio. Some programs can also decode special digital modes such as Morse code, teletype, PSK and so on. In some cases, the software is a non-commercial version of proprietary systems used by industry and for signals intelligence. Others are built by open-source communities. SDR#, for example, has an open architecture that enables and encourages the contribution of "plug-ins" to add extra functionality.
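Decoding a digital mode can be surprisingly simple once the signal processing is done. This minimal Morse sketch assumes a hypothetical tone detector has already turned the audio into one on/off flag per Morse time unit, with perfect timing; real decoders must also estimate sending speed and cope with noise. `decode` and `key` are illustrative names, not part of any SDR program.

```python
from itertools import groupby

# International Morse code, letters only (digits and punctuation omitted for brevity).
MORSE = {
    ".-": "A", "-...": "B", "-.-.": "C", "-..": "D", ".": "E", "..-.": "F",
    "--.": "G", "....": "H", "..": "I", ".---": "J", "-.-": "K", ".-..": "L",
    "--": "M", "-.": "N", "---": "O", ".--.": "P", "--.-": "Q", ".-.": "R",
    "...": "S", "-": "T", "..-": "U", "...-": "V", ".--": "W", "-..-": "X",
    "-.--": "Y", "--..": "Z",
}

def decode(keyed):
    """Decode key-down (True) / key-up (False) flags, one flag per Morse time unit.
    Assumes standard timing: dot = 1 unit, dash = 3, letter gap = 3, word gap = 7."""
    text, symbol = [], ""
    for is_on, run in groupby(keyed):
        length = sum(1 for _ in run)
        if is_on:
            symbol += "." if length < 2 else "-"
        elif length >= 3 and symbol:       # a long gap ends the current letter
            text.append(MORSE.get(symbol, "?"))
            symbol = ""
            if length >= 7:                # an even longer gap also ends the word
                text.append(" ")
    if symbol:                             # flush a final letter with no trailing gap
        text.append(MORSE.get(symbol, "?"))
    return "".join(text).strip()

def key(message):
    """Hypothetical test helper: turn dot/dash text (letters separated by spaces)
    into perfectly timed on/off flags."""
    flags = []
    for letter in message.split():
        for mark in letter:
            flags += [True] * (1 if mark == "." else 3) + [False]   # mark + 1-unit gap
        flags += [False] * 2               # extend to a 3-unit letter gap
    return flags

print(decode(key("... --- ...")))
```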

A final word of explanation about the "I/Q data" used by most SDRs. I/Q stands for In-phase and Quadrature. This simply means that the signal capture unit outputs two streams of signal data, one 90 degrees out of phase with the other. I/Q data is fairly straightforward to produce, and it enables a lot of mathematical magic. For example, I/Q data lets software tell a signal above the tuned frequency from one below it, and it turns demodulating AM, FM and SSB into simple arithmetic. It's sort of like making recorded music sound better by using stereo, or seeing further using binoculars. Most of the advanced forms of data, voice and video modulation are based on the mathematics underlying I/Q data. And, unless you want to write your own SDR software, that's probably all you need to know about this subject!
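Here is a minimal Python sketch of some of that mathematical magic, using made-up I/Q samples at an assumed 48,000 samples per second. With I and Q combined into one complex number, AM demodulation is just the magnitude, FM demodulation is the phase change from one sample to the next, and the sign of that change distinguishes a signal above the tuned frequency from one below it. With the I stream alone this is impossible, since cos(θ) = cos(−θ).

```python
import cmath
import math

RATE = 48_000  # samples per second (illustrative)

def iq_tone(freq_hz, n, amplitude=1.0):
    """Complex I/Q samples of a tone offset freq_hz from the tuned frequency.
    I = amplitude*cos(phase) and Q = amplitude*sin(phase): two streams 90 degrees apart."""
    return [amplitude * cmath.exp(2j * math.pi * freq_hz * k / RATE) for k in range(n)]

def am_envelope(iq):
    """AM demodulation: the envelope is simply the magnitude sqrt(I^2 + Q^2)."""
    return [abs(s) for s in iq]

def fm_frequency(iq):
    """FM demodulation: phase change between consecutive samples, converted to Hz."""
    return [cmath.phase(b * a.conjugate()) * RATE / (2 * math.pi)
            for a, b in zip(iq, iq[1:])]

above = fm_frequency(iq_tone(+1_000, 8))
below = fm_frequency(iq_tone(-1_000, 8))
print(round(above[0]), round(below[0]))                                    # 1000 -1000
print([round(v, 3) for v in am_envelope(iq_tone(1_000, 4, amplitude=0.5))])  # [0.5, 0.5, 0.5, 0.5]
```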

By the way, writing your own SDR software is not that difficult. I have actually written my own software radio. It requires only an intermediate-level understanding of the mathematics, and you can use pre-developed signal processing libraries like Intel Integrated Performance Primitives (IPP) to make coding easier. But that's a story for another article.